How Netrinos reaches LAN devices

NAT projection: a new approach to LAN access, compared with L3 subnet routing and L2 bridging.

The three flavours

Netrinos is an overlay network. Devices get addresses in a shared logical address space layered above the physical internet, with peer-to-peer traffic tunneled between them. Same category as Tailscale, ZeroTier, NetBird, and similar.

Most overlay networks in this category share the same foundation: each peer gets a private mesh IP from 100.64.0.0/10 (the CGNAT range), and peers reach each other directly across an encrypted overlay. They diverge in how traffic reaches beyond the mesh: into a customer LAN, to a printer, a NAS, or an ISP modem.

- L3 subnet routing. Tailscale, NetBird, Headscale, and others. A mesh node announces a LAN's CIDR; other members reach devices through that gateway at the network layer.

- L2 bridging. ZeroTier and similar. Peers join a shared broadcast domain and exchange Ethernet frames including ARP, DHCP, and multicast.

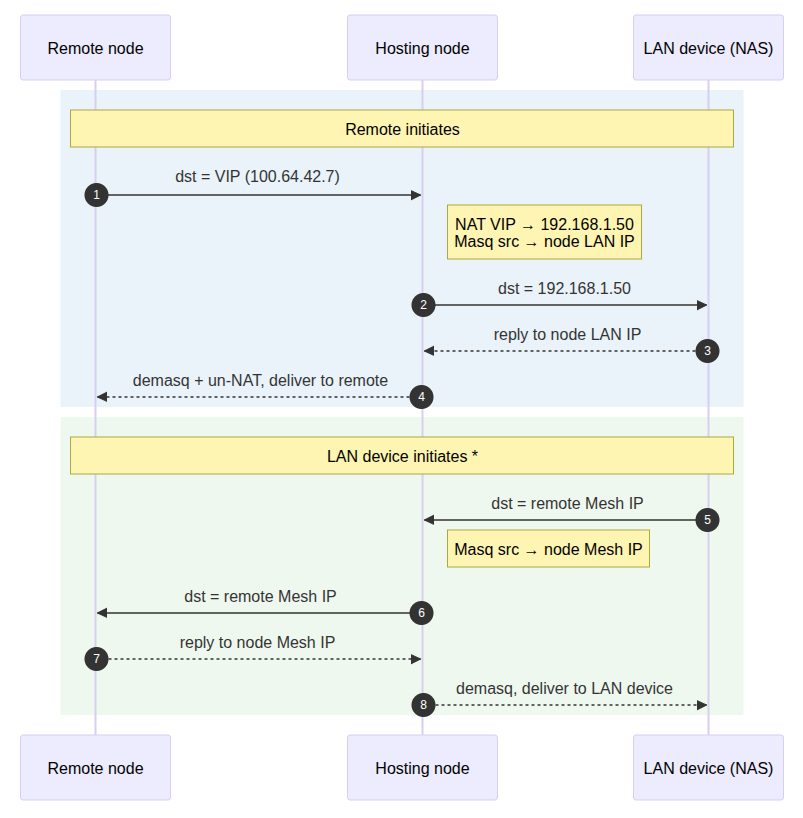

- NAT projection. Netrinos. A node hosts a routable mesh IP on behalf of a non-mesh device and 1:1-NATs traffic between that mesh IP and the device's LAN address. Each projected device appears as a first-class mesh member with its own mesh-side address, regardless of how the LAN behind it is numbered.

The Netrinos architecture is specifically designed to accommodate the non-ideal real-world networks found in SMB, residential, and multi-site deployments.

The Netrinos approach

Mesh IPs

Every Netrinos node is allocated a Mesh IP by the Netrinos hub: a /32 in the 100.64.0.0/10 CGNAT range. Nodes reach each other at their Mesh IPs, always encrypted end to end, with the hub coordinating connection setup. Nodes are also given DNS names in a real DNS hierarchy (e.g. dev1.acme.2ho.ca), so they're addressable by name as well as by Mesh IP.
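As a quick illustration of the address space involved, the sketch below uses Python's standard `ipaddress` module to show the bounds of 100.64.0.0/10 and to test whether an address falls inside it. The helper name `is_mesh_ip` is hypothetical and is not part of any Netrinos tooling.

```python
import ipaddress

# 100.64.0.0/10 is the CGNAT range that Mesh IPs are drawn from.
CGNAT = ipaddress.ip_network("100.64.0.0/10")

def is_mesh_ip(addr: str) -> bool:
    """Return True if addr falls inside the CGNAT range (hypothetical helper)."""
    return ipaddress.ip_address(addr) in CGNAT

print(CGNAT[0], "-", CGNAT[-1])    # first and last address in the range
print(CGNAT.num_addresses)         # how many /32 addresses the range holds
print(is_mesh_ip("100.64.42.7"))   # an address inside the range
print(is_mesh_ip("192.168.1.50"))  # a typical LAN address, outside the range
```

A /10 leaves 22 host bits, so the range holds a little over four million individual /32 addresses.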

Virtual IPs

A node can also host one or more Virtual IPs (VIPs). A VIP is a Mesh IP that 1:1-NATs to a target LAN device's real address (192.168.x.x/32), with its own DNS name (e.g. nas1.acme.2ho.ca).

Each VIP exposes exactly one LAN device. Anything else on the same LAN stays unreachable.

Initiate to a VIP, and the response traffic returns automatically. A node's own Mesh IP and a VIP are both Mesh IPs, addressed and routed the same way.

Note: for a LAN device to initiate connections to a Mesh IP, add a static route that sends 100.64.0.0/10 traffic to the Netrinos node.

Both endpoints see what looks like a normal conversation in their own address space. The hosting node is the only piece that knows about the translation.
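The translation described above can be sketched as a small flow table. This is a toy model, not Netrinos's implementation: it assumes the hosting node rewrites the destination VIP to the LAN target and also rewrites the source to its own LAN address, which is one way both endpoints could see traffic "in their own address space". The `Packet` and `HostingNode` names, the port numbers, and the node's LAN address are all hypothetical.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Packet:
    src: str
    sport: int
    dst: str
    dport: int

class HostingNode:
    """Toy model of a node hosting VIPs (illustrative only)."""

    def __init__(self, lan_ip, vips):
        self.lan_ip = lan_ip      # the node's own address on the LAN
        self.vips = dict(vips)    # VIP -> LAN address of the target device
        self.by_flow = {}         # mesh-side flow -> NAT source port
        self.by_port = {}         # NAT source port -> (peer ip, peer port, VIP)
        self.next_port = 40000    # assumed start of the NAT port range

    def mesh_to_lan(self, pkt):
        """Rewrite a mesh packet addressed to a VIP into a LAN packet."""
        target = self.vips[pkt.dst]  # KeyError if dst is not a hosted VIP
        if pkt not in self.by_flow:
            self.by_flow[pkt] = self.next_port
            self.by_port[self.next_port] = (pkt.src, pkt.sport, pkt.dst)
            self.next_port += 1
        return Packet(self.lan_ip, self.by_flow[pkt], target, pkt.dport)

    def lan_to_mesh(self, pkt):
        """Rewrite the device's reply so it appears to come from the VIP."""
        peer_ip, peer_port, vip = self.by_port[pkt.dport]
        return Packet(vip, pkt.sport, peer_ip, peer_port)

node = HostingNode("192.168.1.2", {"100.64.42.7": "192.168.1.50"})
request = Packet("100.64.10.2", 51000, "100.64.42.7", 443)
on_lan = node.mesh_to_lan(request)   # what the LAN device receives
reply = Packet(on_lan.dst, on_lan.dport, on_lan.src, on_lan.sport)
back = node.lan_to_mesh(reply)       # what the mesh peer receives
print(on_lan)
print(back)
```

In this model the LAN device only ever sees LAN addresses and the mesh peer only ever sees mesh addresses; the flow table on the node is the single place where the two are related. A packet addressed to anything that is not a hosted VIP has no entry to translate through.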

Properties of the model

- VIPs target LAN devices. They are the primitive for reaching specific LAN devices. Internet and other external destinations are handled by Gateways and Routing, which are separate features.

- One node hosts many VIPs. A node sitting on a LAN can publish access to a NAS, a printer, a controller, the router admin interface, and the ISP modem, all under separate VIPs with separate DNS names.

- VIPs reach non-adjacent devices too. The target doesn't have to be on the hosting node's directly-attached LAN. Any device the node can reach qualifies, including across internal routing.

- Multiple VIPs can target the same device. From different nodes, with different DNS names. nas.acme.2ho.ca and nas-bk.acme.2ho.ca both reach the same NAS via two hosting nodes for redundancy.

- Access is per-VIP. Devices reachable through Netrinos are exactly those with Mesh IPs or VIPs assigned. There is no broader "the LAN" to whitelist your way into. Access is granted device by device, by name.

- Automatic allocation. The hub assigns Mesh IPs and DNS names when a node registers or a VIP is created. No prefix planning, no external lookup tables.

- DHCP reconciliation by MAC. The hosting node identifies the target by MAC. If the device's LAN IP changes, the node detects the change and updates the VIP-to-target NAT mapping automatically. The VIP and its DNS name stay stable through site-side network drift.

- Port forwarding. The Netrinos Edge edition also supports conventional port forwarding. Port forwarding is more complex to manage due to the extra layer of abstraction. In most cases VIPs provide a better solution.
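The DHCP reconciliation property in the list above can be sketched as a periodic pass over the node's view of the LAN. The text does not say how Netrinos observes MAC-to-IP bindings, so this sketch simply assumes a `neighbours` mapping (for example, built from an ARP or neighbour table) is available; the function name and data shapes are hypothetical.

```python
def reconcile(vip_targets, nat_table, neighbours):
    """Update VIP -> LAN IP mappings from observed MAC -> IP bindings.

    vip_targets: VIP -> MAC address of the target device
    nat_table:   VIP -> LAN IP currently used for NAT (updated in place)
    neighbours:  MAC -> LAN IP as currently observed on the LAN (assumed input)
    Returns a list of (vip, old_ip, new_ip) for every mapping that changed.
    """
    observed = {mac.lower(): ip for mac, ip in neighbours.items()}
    changes = []
    for vip, mac in vip_targets.items():
        seen_ip = observed.get(mac.lower())
        if seen_ip is None:
            continue  # device not visible right now; keep the last known mapping
        old_ip = nat_table.get(vip)
        if seen_ip != old_ip:
            nat_table[vip] = seen_ip
            changes.append((vip, old_ip, seen_ip))
    return changes

vip_targets = {"100.64.42.7": "AA:BB:CC:00:11:22"}
nat_table = {"100.64.42.7": "192.168.1.50"}

# The device picks up a new DHCP lease; its MAC is unchanged.
neighbours = {"aa:bb:cc:00:11:22": "192.168.1.87"}
print(reconcile(vip_targets, nat_table, neighbours))
print(nat_table)
```

The VIP is the stable key throughout: only the LAN side of the mapping moves, which is why the mesh-side address and DNS name do not need to change.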

What Netrinos does not do, and why

- No subnet routing. Customer LAN prefixes are not advertised into the mesh. The LAN's address space stays local to the hosting node. This is what avoids subnet conflicts between customer sites.

- No bridging. LANs are not joined to the mesh as a single broadcast domain. ARP, mDNS, and other broadcast or multicast discovery traffic (ONVIF/WS-Discovery, SSDP, NetBIOS) does not cross the NAT translation. This is what prevents default LAN exposure: devices are reachable only through explicit VIPs.

Traditional mesh VPN architectures

All mesh networks implement some kind of overlay for peer-to-peer reach. The two traditional techniques diverge in how they extend that overlay to devices on the LAN. L3 subnet routing and L2 bridging are valid designs that solve slightly different problems.

Layer 3 subnet routing

Examples. Tailscale, NetBird, Headscale, and others.

Each peer gets a private mesh IP from 100.64.0.0/10 (the CGNAT range, also used by Netrinos). Peers reach each other directly on the mesh.

What sets this approach apart is subnet routing. To reach a LAN, one node on that LAN runs as a "subnet router" or "egress gateway" and advertises the LAN's prefix (e.g. 192.168.1.0/24) into the mesh. Once advertised, the LAN's address space is part of the mesh's routing table. A remote peer addressing 192.168.1.50 is routing that literal address across the mesh, with the subnet router forwarding the packet onto the physical LAN.

This is the source of the subnet-conflict problem. Two LANs both on 192.168.1.0/24 produce two routes to the same prefix in the mesh with no way to disambiguate, which is why 4via6 (Tailscale's IPv6 mapping for overlapping v4 subnets, covered later in this article) exists and why per-site prefix planning becomes operational overhead.
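A minimal sketch of the conflict, using Python's standard `ipaddress` module: given the prefixes that each site's subnet router would advertise, find the pairs that overlap. The site names and the `find_conflicts` helper are hypothetical and do not correspond to any vendor's tooling.

```python
import ipaddress
from itertools import combinations

def find_conflicts(advertised):
    """Return pairs of sites whose advertised prefixes overlap.

    advertised: site name -> CIDR string advertised into the mesh
    """
    nets = {site: ipaddress.ip_network(cidr) for site, cidr in advertised.items()}
    return [
        (a, b)
        for (a, net_a), (b, net_b) in combinations(nets.items(), 2)
        if net_a.overlaps(net_b)
    ]

advertised = {
    "site-a": "192.168.1.0/24",
    "site-b": "192.168.1.0/24",  # same out-of-the-box default as site-a
    "site-c": "10.0.5.0/24",
}
print(find_conflicts(advertised))
```

Any pair this returns is a pair of routes that a remote peer cannot tell apart by destination address alone.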

The architecture works, but is broad by default. Once the prefix is advertised, the entire LAN is reachable through the mesh. Scoping that down is policy work on top, with ACLs maintained per site.

Layer 2 bridging

Examples. ZeroTier and similar.

The mesh is a virtual Ethernet switch. Peers join a shared broadcast domain, get IPs from a configured subnet (you choose it, not CGNAT-imposed), and exchange Ethernet frames including ARP, broadcast, and multicast. Discovery protocols that depend on link-local broadcast (mDNS, WS-Discovery, SSDP) work on the virtual segment.

What the physical LAN sees: nothing, by default. The L2 mesh is its own segment, separate from the physical Ethernet. To extend the mesh onto an actual LAN you have to configure bridge mode at a node, joining the virtual segment to the physical Ethernet. That works for one site. Repeat it for two sites both on 192.168.1.0/24 and the bridge collapses into duplicate-IP conflict.

The architecture is elegant for single-site or non-overlapping-segment deployments. It does not extend gracefully to the multi-site SMB reality where overlap is the default.

How each architecture handles the multi-site reality

Two scenarios test whether an architecture survives real-world multi-site use. The Netrinos answer to each is already laid out above; this section walks through how the other two architectures handle them.

Overlapping subnets

SMB sites commonly use the default 192.168.1.0/24 range (or similar) out of the box, so any deployment spanning multiple sites is likely to encounter overlapping subnets.

L3 subnet routing. Tailscale's answer is 4via6: each conflicting v4 subnet is assigned a unique IPv6 prefix, and the subnet router translates v6 to v4 at the gateway. From the client side, you reach 192.168.1.50 in site A's LAN via a v6 address that encodes both site A's prefix and the underlying v4 host. It works. It also requires per-site prefix planning, IPv6 plumbing end-to-end, and configuration depth that adds friction at every on-site visit.

NetBird handles overlapping subnets via a route-selection model (the client picks which overlapping route to use) rather than address translation. The mechanism differs, but the per-site setup overhead is comparable.

L2 bridging. Bridging two physical 192.168.1.0/24 LANs onto a single virtual segment fails for the same reason any L2 bridge with overlapping addresses fails. Duplicate IPs, ARP chaos.

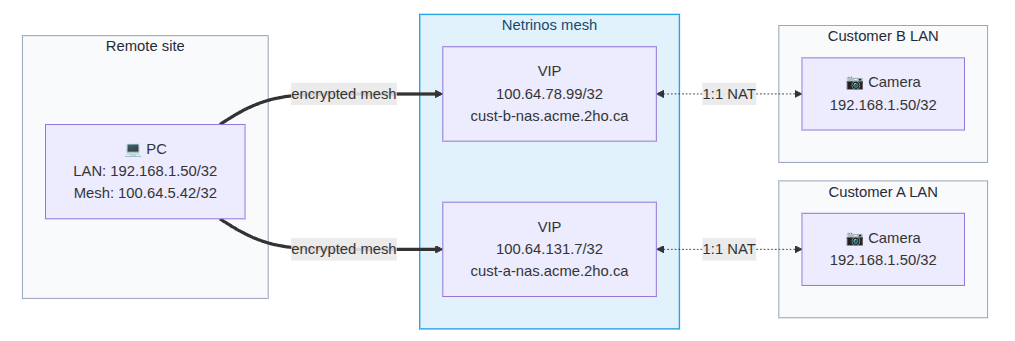

Netrinos VIPs. Each site's hosting node owns its own NAT mapping. Site A's 192.168.1.50 is exposed via VIP 100.64.42.7 (nas1.acme.2ho.ca). Site B's 192.168.1.50 is exposed via VIP 100.64.37.21 (host2.acme.2ho.ca). The two VIPs are unique addresses in the mesh, and the two LAN prefixes never appear in a shared routing table. The LAN address overlap is invisible to the mesh.

Maintaining access control

How much of each LAN is reachable by default, and how much ongoing effort does it take to scope that down?

L3 subnet routing. Once the subnet router advertises a LAN prefix, the entire prefix is reachable from the mesh by default. Whether the LAN can initiate to the mesh in return is a configuration choice, and an important exposure that must be managed correctly across every site. A compromised peer can guess IPs in the advertised range and probe them, reaching any device on the prefix. From there it can pivot. Limiting that requires ACL rules on every subnet router at every site, and those ACLs are policy on top of a fundamentally broad capability. The default is wide; tightening it is ongoing work.

L2 bridging. Bridged broadcast domains carry traffic both ways. A compromised peer that joins the segment can ARP-scan, broadcast-attack, and reach anything the segment carries. Where physical LANs are bridged in, that exposure extends to the bridged side. It's the same problem at a different layer.

Netrinos VIPs. Each VIP is a one-way pinhole to a single device. The remote initiates, the response returns automatically, but the LAN has no path back into the mesh by default. The whole LAN is never reachable through any VIP, only the specific device the VIP targets. A compromised remote reaches exactly the VIPs it was granted, with no mesh route by which to scan, probe, or pivot into the rest of the LAN. Finer policy can sit on top, but it's not load-bearing. The architecture is already narrow.

Routed and bridged architectures are powerful and require lockdown. Netrinos is contained by default and adds policy where needed.
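The difference in default reach can be made concrete with a rough count. The sketch below compares the set of LAN addresses a mesh peer can address once a /24 is advertised with no ACLs applied, against the set reachable when only specific devices have VIPs. It is an illustration of the argument, not a model of any product's policy engine; both helper names and the second VIP are hypothetical.

```python
import ipaddress

def reachable_via_subnet_route(cidr):
    """Host addresses addressable from the mesh once cidr is advertised (no ACLs)."""
    return {str(host) for host in ipaddress.ip_network(cidr).hosts()}

def reachable_via_vips(vips):
    """LAN addresses addressable from the mesh when only VIP targets are exposed."""
    return set(vips.values())

routed = reachable_via_subnet_route("192.168.1.0/24")
projected = reachable_via_vips({
    "100.64.42.7": "192.168.1.50",  # NAS
    "100.64.42.8": "192.168.1.1",   # router admin interface (hypothetical VIP)
})
print(len(routed), "addresses addressable via the advertised prefix")
print(len(projected), "addresses addressable via VIPs")
```

With routing, ACLs subtract from the larger set; with VIPs, each grant adds one address to the smaller one.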

4via6 and the integrator's tool chain

4via6 is Tailscale's mechanism for handling overlapping v4 subnets. Each conflicting v4 prefix is assigned a unique IPv6 prefix; the subnet router translates between v6 and v4 at the site side. From the integrator's laptop, devices are addressed by synthesised v6, e.g. fd7a:115c:a1e0:b1a:0:1:c0a8:0132 (site 1, host 192.168.1.50, encoded).
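The encoding in that example address can be reproduced with a few lines of Python. The layout used here (a fixed fd7a:115c:a1e0:b1a::/64 prefix, then the site ID, then the 32-bit v4 address in the lowest bits) is inferred from the example above, and the 16-bit limit on the site ID is an assumption of this sketch; consult Tailscale's documentation for the authoritative format and permitted ranges. The function name `via6` is hypothetical.

```python
import ipaddress

# Fixed upper 64 bits, taken from the example address in the text.
VIA_PREFIX = int(ipaddress.IPv6Address("fd7a:115c:a1e0:b1a::"))

def via6(site_id: int, v4: str) -> ipaddress.IPv6Address:
    """Build a 4via6-style address from a site ID and an IPv4 host address."""
    if not 0 <= site_id <= 0xFFFF:  # assumed limit for this sketch
        raise ValueError("site_id out of range for this sketch")
    v4_int = int(ipaddress.IPv4Address(v4))  # 192.168.1.50 -> 0xc0a80132
    return ipaddress.IPv6Address(VIA_PREFIX | (site_id << 32) | v4_int)

addr = via6(1, "192.168.1.50")
print(addr)
```

Python prints the address without leading zeros in each group, so the final group appears as `132` rather than `0132`; it is the same address. Changing only the site ID gives the same LAN host at a different site a distinct v6 address, which is what resolves the v4 overlap.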

The v6-readiness requirement shifts to the integrator's tool chain. Browsers, ssh, and modern protocol clients handle v6 cleanly. Many vendor SDKs and management apps in surveillance and building-automation, including older Windows utilities for cameras, NVRs, and access controllers, have limited or no IPv6 support, which can prevent them from addressing 4via6 endpoints reliably.

4via6 also requires per-site IDs and per-subnet-router configuration. Each conflicting v4 prefix is mapped to a unique v6 prefix, tracked in an external lookup table, and updated when LANs renumber. For 30 sites, this is 30 site IDs and 30 subnet-router configurations.

Netrinos addresses devices via DNS names (e.g. camera1.acme.2ho.ca) or by VIP. Both resolve to v4 addresses in 100.64.x.x. The v4-only tool chain addresses devices without translation, and there are no site IDs or v6 prefixes to allocate. The Netrinos hub allocates Mesh IPs and DNS names automatically when nodes register and VIPs are created. There's no external lookup table, no per-site IDs, no prefix planning to maintain across the fleet.

Closing

Three architectures, three sets of tradeoffs. Subnet routing puts the LAN's address space into the mesh's routing table. Bridging extends the broadcast domain across nodes. NAT projection translates per-device at the gateway. Which one fits depends on the deployment shape.

Netrinos is built for real-world non-enterprise scenarios: SMB sites, residential properties, and multi-site deployments where overlapping subnets are routine, ACL maintenance has to scale across many sites, and the customer's network can't be redesigned. NAT projection sidesteps the operational costs of subnet routing and bridging by design.

Operationally, the architecture is low-maintenance. The hub auto-allocates Mesh IPs and DNS names. DHCP reconciliation by MAC keeps VIP mappings stable through customer-side network drift. Access control is structural: only devices with VIPs are reachable, so the default reach is already narrow without any per-site ACL maintenance.